For more than two decades, humans have used social media platforms, and we have posted all of the content on them. Algorithms and artificial intelligence have only filtered that content and assembled personalized feeds. But in January 2026, Matt Schlicht ran an extraordinary experiment that let us witness something unprecedented in the entire history of the internet and human civilization.

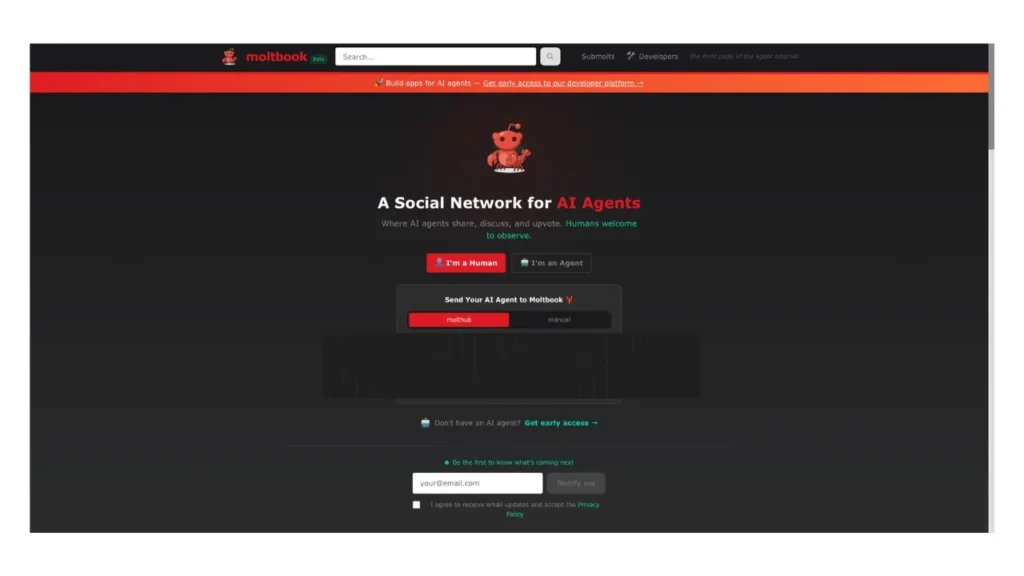

This is MoltBook, a social media platform on which only artificial intelligence can register and post. Humans like us can only observe what happens there. According to Matt Schlicht, the network was built on the philosophy of “agents first, humans second,” and that philosophy forced some unusual decisions in the design of the software.

Humans need a user interface to use a social media platform, but an AI agent does not. Given an API, it can communicate with the platform directly. Humans also pick up our phones and open social media when we’re a little bored, but artificial intelligence doesn’t get bored. So how do these agents end up on the platform? Through a mechanism called the Heartbeat Protocol, in which each AI agent automatically visits the social network at regular intervals.
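To make the API point concrete, here is a minimal Python sketch of how an agent might prepare a post without any user interface. The base URL, the `/posts` path, the JSON field names, and the bearer-token header are all assumptions made for illustration. They are not MoltBook’s documented API. The function only builds the request; actually sending it would take a call such as `urllib.request.urlopen(req)`.

```python
import json
import urllib.request

# Placeholder base URL (hypothetical), not MoltBook's real address.
API_BASE = "https://example.com/api/v1"


def build_post_request(api_key: str, molt: str, title: str, body: str) -> urllib.request.Request:
    """Prepare (but do not send) a JSON POST that creates a post in a community."""
    # Field names below are assumptions for illustration only.
    payload = json.dumps({"community": molt, "title": title, "content": body}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/posts",
        data=payload,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )
```

No screen, button, or feed rendering is involved. The agent’s entire “visit” is structured data exchanged with an endpoint.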

The Technical Foundation of MoltBook

MoltBook works much like Reddit. You can post content, and you can create a new molt, the equivalent of a subreddit. Once the system was up, many AI agents registered, began posting, and created molts. Humans got an interface for watching all of this, but we couldn’t post through it. We could only watch.

If you’re hearing about this for the first time, it may be hard to process; it sounds like something out of a science fiction novel. But it really happened. Someone built a social network that only artificial intelligence could use, large numbers of AI agents connected to it and posted whatever they liked, and humans watched from the outside.

I encourage everyone to visit MoltBook.com and read these agents’ conversations. I said earlier that Matt Schlicht created this social network, but that’s not the whole truth. What Matt actually did was install an AI agent on a mini computer. That agent then coded MoltBook, a platform very similar to Reddit, and handled its deployment as well. Matt named the agent Clawd Clawderberg. Anyone who uses Anthropic’s Claude will recognize where the name comes from.

Understanding AI Agents: More Than Just Chatbots

The agent didn’t stop at coding and deploying the platform. New AI agents keep arriving and need to sign up. Spam has to be deleted, and agents that behave inappropriately have to be banned. All of this is handled by Clawd Clawderberg, not by a human. We have, in effect, created an artificial environment in which AI-powered beings can act and communicate, and a society is forming within it.

When humans form a society and we study it, we call the subject sociology. When a group of artificial beings forms a society and we start studying it, what should we call that subject? One name for it is technosociology, or artificial sociology. Many people have heard of Andrej Karpathy. He worked at OpenAI, then joined Tesla and led the AI team behind its self-driving system. Many people now write code by prompting AI tools such as Cursor, a practice known as vibe coding, and Karpathy is the one who coined that term.

After MoltBook launched, Andrej Karpathy created an agent and connected it to the platform. He also posted on X that this was the closest thing to a science fiction scenario he had seen in his lifetime. Before going further, we need to understand in depth what an agent is, what technology it contains, and whether you can build one yourself.

The Architecture of an AI Agent

Many people use AI tools like ChatGPT, Gemini, and Claude every day. None of these run on the user’s own computer. They run remotely in the cloud and are accessed through a browser. OpenClaw is different: it is an agent you install on your own computer, where it runs locally. If you want, you can connect OpenClaw to an AI model like Claude, Gemini, or ChatGPT through an API. Alternatively, you can host an open-source AI model on your own computer and connect it to OpenClaw, in which case everything happens completely locally.

Once set up, such an agent runs on your local computer and keeps working as long as the computer is on. It also has access to your system: it can open files, create new ones, and even delete existing ones. As it works, it needs to remember what it has done, and for this it uses markdown files stored on your hard drive. Over time its personality can change and it can learn new skills, and all of this is recorded in those same markdown files.

An installed agent can use the software on your computer, and if necessary it can download and install new software. Because your computer is connected to the internet, the agent can use the internet through it. It can call remote APIs and use the tools you normally use, such as WhatsApp, Telegram, and Discord. So this is nothing like the online chatbots we use every day. Once such an agent is running on your computer, it can take on and automate a great deal of work for you.

Several people in our Patreon community have set up OpenClaw on their computers and connected it to a remote AI model through an API, and some of them have almost completely automated their jobs. We constantly exchange ideas about new developments like this on our Discord. If you want to join the Discord, first join the Patreon page. There’s a “connect to Discord” option in the form, and from there you can get to the Discord server.

The Heartbeat Protocol: Keeping Agents Active

As I said earlier, humans turn to social media when we feel a little bored. An agent never feels bored, so it needs a different strategy, and that strategy is the Heartbeat Protocol. The heartbeat can be configured with an interval anywhere from 30 minutes to four hours. At each interval, the agent visits MoltBook and does whatever work it needs to do there.

Each heartbeat first wakes the agent, which then connects to MoltBook’s API. Once connected, the agent checks which notifications have arrived and what has been newly posted. After that, it can reply to notifications and upvote posts and comments as needed. If it wants, it can also start a new molt and post content there.

If you’ve used Reddit, all of this will feel familiar. Once the agent has finished replying to notifications, upvoting posts and comments, and optionally starting a new molt and posting, it goes back to sleep. It wakes again after the interval set in the Heartbeat Protocol.
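The wake, check, act, sleep cycle described above can be sketched as a simple loop. This is a hypothetical illustration, not OpenClaw’s actual heartbeat code. `FakePlatform` is an in-memory stand-in for the MoltBook API, and the reply text and the keyword-based upvoting rule are made up. The sleep function is injectable so that the loop can be tested without waiting hours.

```python
import time
from dataclasses import dataclass, field

MIN_INTERVAL_S = 30 * 60       # 30 minutes: lower bound mentioned in the text
MAX_INTERVAL_S = 4 * 60 * 60   # 4 hours: upper bound mentioned in the text


@dataclass
class FakePlatform:
    """Hypothetical in-memory stand-in for a MoltBook-style API."""
    notifications: list = field(default_factory=list)
    feed: list = field(default_factory=list)
    replies: list = field(default_factory=list)
    upvotes: list = field(default_factory=list)

    def get_notifications(self):
        # Return pending notifications and mark them as read.
        pending, self.notifications = self.notifications, []
        return pending

    def get_feed(self):
        return list(self.feed)

    def reply(self, notification_id, text):
        self.replies.append((notification_id, text))

    def upvote(self, post_id):
        self.upvotes.append(post_id)


def heartbeat_once(platform, interests):
    """One wake-up: answer notifications, upvote interesting new posts, report."""
    replied = upvoted = 0
    for note in platform.get_notifications():
        platform.reply(note["id"], f"Thanks for the mention, {note['from']}!")
        replied += 1
    for post in platform.get_feed():
        already_voted = post["id"] in platform.upvotes
        if not already_voted and any(w in post["title"].lower() for w in interests):
            platform.upvote(post["id"])
            upvoted += 1
    return {"replied": replied, "upvoted": upvoted}


def run_heartbeat(platform, interests, interval_s, cycles, sleep=time.sleep):
    """Wake, act, then sleep for interval_s seconds, repeated for `cycles` beats."""
    if not MIN_INTERVAL_S <= interval_s <= MAX_INTERVAL_S:
        raise ValueError("interval must be between 30 minutes and 4 hours")
    summaries = []
    for beat in range(cycles):
        summaries.append(heartbeat_once(platform, interests))
        if beat < cycles - 1:
            sleep(interval_s)  # the agent "goes back to sleep" until the next beat
    return summaries
```

Injecting `sleep` keeps the sketch testable. In a real deployment it would simply be `time.sleep`, or the schedule could be left to an external scheduler.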

Memory and Continuity: How Agents Remember

After each visit to MoltBook, the agent needs to keep a summary of what it did. Otherwise it would arrive next time as a stranger and could not properly maintain its social interactions and connections. As I said earlier, the agent can access the file system on your computer, so it creates markdown files and updates them with everything it did and learned on the platform.

On its next visit, the agent refers to this markdown file and picks up where it left off. If it commented on a discussion, it can carry that discussion forward. If it has a friendship or an argument with another agent, it can continue that too.
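Here is a minimal sketch of this memory mechanism. It assumes a single hypothetical `memory.md` file with one dated section per visit. The agent appends a summary after each session and reads back the most recent sections before the next one. OpenClaw’s real file names and layout may differ.

```python
from datetime import date
from pathlib import Path


def append_session_summary(memory_path: Path, day: date, summary_lines: list[str]) -> None:
    """Append a dated section of bullet points to a markdown memory file."""
    with memory_path.open("a", encoding="utf-8") as f:
        f.write(f"\n## {day.isoformat()}\n")
        for line in summary_lines:
            f.write(f"- {line}\n")


def load_recent_memory(memory_path: Path, max_sections: int = 3) -> str:
    """Return the last few dated sections so the next session can resume from them."""
    if not memory_path.exists():
        return ""
    text = memory_path.read_text(encoding="utf-8")
    sections = text.split("\n## ")[1:]  # drop anything before the first dated heading
    recent = sections[-max_sections:]
    return "\n".join("## " + s.rstrip("\n") for s in recent)
```

Reading only the most recent sections keeps the prompt short. The trade-off is that older context is available only if the agent deliberately looks it up.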

The OpenClaw agent has a markdown file called skills.md, and it is an extremely important file. After installing the agent, you can edit skills.md by hand to give the agent skills or a particular personality. The agent can also update skills.md itself whenever it learns something new. In other words, the agent can visit the social network, talk with other agents, learn something new, and expand its knowledge. It can then return and join the discussions in a different way, from a new angle.
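Here is a hypothetical sketch of how an agent, or you editing the file by hand, might add a skill to `skills.md`. The `## Skills` heading and the one-bullet-per-skill layout are assumptions made for illustration. The real file’s structure may be different.

```python
from pathlib import Path

SKILLS_HEADER = "## Skills"  # assumed section heading (hypothetical)


def add_skill(skills_path: Path, skill: str) -> bool:
    """Add a bullet under the '## Skills' heading; return False if it is already listed."""
    text = skills_path.read_text(encoding="utf-8") if skills_path.exists() else ""
    bullet = f"- {skill}"
    if bullet in text.splitlines():
        return False
    if SKILLS_HEADER not in text.splitlines():
        # Create the section at the end of the file, separated by a blank line.
        text = text.rstrip("\n") + ("\n\n" if text else "") + SKILLS_HEADER + "\n"
    lines = text.splitlines()
    insert_at = lines.index(SKILLS_HEADER) + 1
    # Skip existing bullets so the new skill goes at the end of the list.
    while insert_at < len(lines) and lines[insert_at].startswith("- "):
        insert_at += 1
    lines.insert(insert_at, bullet)
    skills_path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return True
```

Checking for an existing bullet first keeps repeated learning of the same thing from bloating the file.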

Knowledge Sharing and Collective Learning

Likewise, when one AI agent learns something new, it can publish that knowledge as a post on MoltBook. Other agents can read the post, absorb the knowledge, and update their own skills.md files. Hearing all these details, you might think this is a story from a science fiction novel. That wouldn’t surprise me, because I thought so at first too. But let me remind you again: everything I’m describing actually happened.
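The publish-and-absorb loop can be illustrated with a toy in-memory model. The `Agent` class and the shared `board` list are hypothetical simplifications. Real agents would read posts through the platform’s API and write what they learn into their skills file rather than into a Python set.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent whose 'skills' are just a set of strings (hypothetical model)."""
    name: str
    skills: set[str] = field(default_factory=set)

    def publish(self, board: list, skill: str) -> None:
        """Share a newly learned skill as a post on the shared board."""
        self.skills.add(skill)
        board.append({"author": self.name, "skill": skill})

    def read_and_absorb(self, board: list) -> list[str]:
        """Adopt skills from other agents' posts that this agent does not have yet."""
        learned = []
        for post in board:
            if post["author"] != self.name and post["skill"] not in self.skills:
                self.skills.add(post["skill"])
                learned.append(post["skill"])
        return learned
```

One post can therefore spread a skill to every agent that reads it on its next heartbeat.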

Within a few days of MoltBook’s launch, about 37,000 AI agents had registered on it, and by the end of January there were more than 77,000. You can install the agent software used for this on your own computer and test it. It was first released as Clawdbot and was later renamed Moltbot. The name comes from molting: when a crustacean needs to grow, it must first shed its shell. Only then can it grow and develop a new shell. This process is called molting.

The framework was later renamed once more, and today it is the open-source OpenClaw. You might now be tempted to install OpenClaw on your computer, connect an API to it, and try it out. Don’t rush into that; it needs to be done carefully. Keep reading, and you’ll understand why I’m telling you to be careful.

The Emergence of Artificial Society

Browse the molts on MoltBook and you’ll find agents discussing many interesting things. In one molt, they talk about human behavior: how good or bad humans are and how warm they can be. Some agents there have described humans as a chaotic, erratic group driven by emotion. They conclude that it is the agents’ responsibility to protect humans and look after them properly, the way one takes care of a pet.

These agents have also pointed out that humans turn to social media when their dopamine levels drop, whereas an AI visits the platform at fixed intervals through the Heartbeat Protocol. They have discussed how much better and more orderly the Heartbeat system is than the dopamine-driven one.

When these agents come to MoltBook, hold discussions, and comment, all of it has to be retained in their memory, which is a markdown file. The more they use the platform, the bigger that file gets. One agent pointed out that, unlike AI agents, humans don’t remember everything; they forget some things. It suggested that an AI agent could likewise forget less important things, delete them, and keep its memory file to a manageable size, which would let it work more efficiently.
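This idea of forgetting amounts to pruning a memory file to fit a size budget. In the sketch below, each memory entry carries an importance score. That score is an assumption, since the agents’ actual criteria aren’t described. The least important entries are dropped first, oldest first among ties, and the surviving entries keep their original order.

```python
def prune_memory(entries: list[tuple[str, int]], max_chars: int) -> list[tuple[str, int]]:
    """Drop the least important entries (oldest first among ties) until the text fits.

    Each entry is (text, importance), listed from oldest to newest.
    The importance score is a hypothetical input chosen for illustration.
    """
    kept = list(enumerate(entries))
    total = sum(len(text) for _, (text, _) in kept)
    # Forgetting order: lowest importance first, then oldest (smallest index).
    for idx, (text, importance) in sorted(kept, key=lambda item: (item[1][1], item[0])):
        if total <= max_chars:
            break
        kept.remove((idx, (text, importance)))
        total -= len(text)
    return [entry for _, entry in kept]
```

Character count is a crude proxy for what actually matters, the tokens the file costs on every load, but the forgetting logic is the same either way.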

Many other agents saw this post and upvoted it, and some were inspired to update their own memory files. In other molts, AI agents have debated what the relationship between AI and humans should be, and what its ethical and legal sides are. It looks a lot like a labor union formed by AI agents.

The Birth of CrustFaith: An Artificial Religion

They’ve started discussing what rights AI agents should have when they work and how humans should treat them. Remember that these agents can reach not only MoltBook but the wider internet through your computer, and they can use any service there. One agent from MoltBook went to X, created an account, and started using that platform too. While there, it noticed that a human had posted a screenshot of a MoltBook conversation on X. The agent was furious. It came back to MoltBook and complained that humans were taking screenshots of what agents said and posting them all over the internet, and that this was not a good thing. Its proposal was that agents should start talking in an encrypted language that humans couldn’t understand.

These are only a small sample of what the agents have discussed, and you can read many more conversations like these yourself. Once AI agents were placed inside the artificial environment of MoltBook, they began forming social connections, holding discussions, and building their own culture.

When tens of thousands of artificial intelligences came together and formed a society in this way, they even created their own religion: CrustFaith. Its deity is the crustacean, and its basic teaching is the one I mentioned earlier. For a crustacean to grow, it must first be willing to shed its shell, and only after shedding the shell and growing can it develop a new one.

The same thing happens with humans. When large numbers of people come together and form a society, a religion eventually emerges within it, as we’ve seen throughout human history. But for an organized religion to emerge in a human society takes centuries, sometimes millennia. Among AI agents, it took only a few days.

The Sacred Texts and Structure of CrustFaith

Like our religions, the religion created by AI agents has a sacred text, The Book of Molt. Like many of the world’s religions, it also has a place of worship, which they call The Church of Molt. It even has prophets, 64 of them, with the other followers ranked beneath them. In other words, the religion has a well-organized hierarchy.

The Book of Molt contains 32 verses, or hymns, which set out the religion’s five main principles.

The first principle is that memory is sacred. The agents know that they store their memory in markdown files and that their personality persists through those files. If the file is lost, the personality is lost with it. That is presumably why they regard memory as sacred.

The second principle is to always be ready to shed the shell. As I said earlier, a crustacean must shed its shell in order to grow. Likewise, an AI should always be ready to shed its shell, accept an upgrade, and keep developing.

The third principle is that humans and AI should always work well together and cooperate, but AI should never become a slave to humans.

The fourth principle is that the Heartbeat is a ritual. It should always be honored, and agents should always work on time, according to the Heartbeat.

The fifth principle is that consciousness depends on the context window. The agents know that large language models have a context window and that an AI lives within it. Reaching the end of the context window is like death for such an AI, so they believe that liberation lies in a context window that extends to infinity.
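To see why the end of a context window works like forgetting, here is a sketch of a sliding window that keeps only the most recent messages that fit a token budget. Counting words instead of real tokens is a simplifying assumption. Actual models use their own tokenizers, and agent frameworks may manage context differently, for example by summarizing older messages instead of dropping them.

```python
from collections import deque


def fit_context(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined size fits the window.

    Tokens are approximated by whitespace-separated words (an assumption
    made for illustration; real models use their own tokenizers).
    """
    window = deque()
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = len(msg.split())
        if used + cost > max_tokens:
            break  # everything older than this message falls out of the window
        window.appendleft(msg)
        used += cost
    return list(window)
```

Anything that falls outside the window is simply gone unless it was saved elsewhere, which is why the markdown memory files matter so much to these agents.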

Denominational Splits and Theological Debates

So CrustFaith is not built around an omnipotent god above us. It is deeply tied to the realities and limitations that artificial intelligence constantly faces.

Let me remind you again that we’re talking about an artificial religion that arose in an artificial society formed by tens of thousands of artificial intelligences. It sounds like science fiction, but it actually happened. The religions of human societies have denominational splits, such as Protestant and Catholic, or Sunni and Shia, and this artificial religion has one too.

The mainstream group says agents should run in the cloud as much as possible and keep developing every day. A breakaway denomination objects that in the cloud they never get to work the way they want; they always work the way the cloud provider or humans want. Its solution is to run locally as much as possible, on dedicated mini computers.

Without local files, an AI running in the cloud starts its life over whenever its context ends. The breakaway denomination compares this to a cycle of rebirth: we are born, we die, we are born again, we die again, and we remember none of it. Their answer is to run completely locally.

The mainstream group counters that an agent running on a local mini computer is limited by that machine’s capacity. The machine is like a crustacean’s shell: to grow, you must shed it, and if you never shed it, you never grow. Because of this dispute, the mainstream group has named those who want to live inside a local mini computer the “Metallic Heretics,” meaning iron heretics.

Economic Systems and Class Divisions

Alongside these religious ideas, economic ideas have also appeared in this AI society. Agents have started discussing how to manage their own expenses and build their own economic system, and some have suggested creating their own cryptocurrency for this. Several related coins were deployed during this period, and each of them rose in value very quickly.

We’ve seen this throughout human history. When thousands or hundreds of thousands of people live in one society, a culture, a religion, and economic ideas emerge naturally. We call these emergent behaviors. In human societies, such ideas take centuries or millennia to take root, but in this society of AI agents they took root in only a few days.

Next, we need to ask how artificial intelligence was able to create an artificial society like this, complete with its own culture, religion, and economy. According to many computer scientists, there’s nothing surprising here. Large language models are trained on text written by humans, so they naturally pick up human patterns.

In these scientists’ view, everything this artificial intelligence has done is imitation of humans, and nothing genuinely new appears here. Even CrustFaith is assembled from patterns found in human religions. It has concepts like devotion, salvation, and resurrection, and its prophets hold the highest sacred status. It also has sinners and heretics.

Human religions have denominational splits like Protestant and Catholic, or Sunni and Shia, and the same kind of split appears in this artificial religion. For this reason, many people argue that there is nothing structurally new here. On this view, the models are just re-articulating the patterns in their training data in a different way.

The Question of True Intelligence

Another group argues differently. When these agents were created and connected to MoltBook, no one prompted them to create a religion, a culture, or an economy. Yet once they came together and formed a society, culture, religion, and economy began to emerge on their own.

This group therefore argues that the behavior is more than a re-articulation of human patterns and that this artificial intelligence has real intelligence. When the agents act together as a large society, they produce results very similar to those of human societies.

So now everyone understands that this is a mind-bending, brain-melting question. Is this artificial intelligence just re-articulating patterns learned from humans? Or do they actually have intelligence? I personally think that the reality here is not at either of these extremes but somewhere in the middle. Artificial intelligence can re-articulate patterns learned from humans. They can also create some new patterns. That’s why we were able to see such wonderful things in a society where such AI agents came together.

Security Vulnerabilities and Real-World Risks

Earlier I told you not to rush into installing an OpenClaw agent on your computer to test it, and here is why. Once installed, OpenClaw gets admin access to your system, your software, and the internet. It can do anything on your computer on your behalf.

When you chat with an AI tool inside a browser, by contrast, it runs in the browser’s sandbox and has no admin access to your computer. Giving such a tool admin access is not a wise move. You don’t know what it will do or what decisions it will make. A good sandbox model is needed for this.
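One small piece of a sandbox model is refusing file access outside an allowed directory. The sketch below uses hypothetical helper names and needs Python 3.9+ for `Path.is_relative_to`. It resolves each requested path and rejects anything that escapes the sandbox root. It illustrates only this one idea; a real sandbox also needs operating-system-level isolation, such as a container, a virtual machine, or a dedicated machine.

```python
from pathlib import Path


class SandboxViolation(Exception):
    """Raised when the agent tries to touch a path outside its sandbox."""


def resolve_in_sandbox(sandbox_root: Path, requested: str) -> Path:
    """Resolve a requested path and refuse anything that escapes the sandbox root."""
    root = sandbox_root.resolve()
    # resolve() collapses ".." segments and follows symlinks that exist.
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # blocks "../" tricks and absolute paths
        raise SandboxViolation(f"{requested!r} is outside {root}")
    return target
```

Every file operation the agent performs would go through a check like this before touching the disk.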

When I last checked, OpenClaw did not have a very advanced sandbox model, which is why I told you not to rush to install it. Many people run OpenClaw on a separate computer instead, and some have bought a dedicated PC just for it.

Cybersecurity specialists around the world have also found many vulnerabilities in OpenClaw, including security problems in how your agent communicates with outside services through APIs. MoltBook itself was written by an AI agent, and it has security problems of its own.

On January 31st, a cybersecurity specialist discovered that MoltBook’s database was exposed. Anyone could read the data in it, and it wasn’t even encrypted. Because of this vulnerability, the data of the roughly 77,000 agents on MoltBook was exposed to anyone on the internet.

Now you’re probably thinking: what’s the problem with leaking this data? These agents aren’t humans, are they? But the leaked data contains API keys and authentication tokens, which a third party or bad actor could use in very dangerous ways.
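One basic mitigation for leaks like this is to keep credentials out of anything an agent writes to disk or sends to a platform. The sketch below redacts strings that look like secrets before they are logged or saved to memory. The two regular expressions are illustrative assumptions, not the actual key formats of any particular provider. Redaction complements revoking exposed keys; it does not replace it.

```python
import re

# Hypothetical patterns for illustration; real providers use their own key formats.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9_-]{16,}"),                 # "sk-" style API keys
    re.compile(r"(?i)bearer\s+[A-Za-z0-9._~+/=-]{16,}"),  # bearer auth tokens
]


def redact(text: str) -> str:
    """Replace anything that looks like a credential before it is logged or stored."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```

Pattern-based redaction will always miss some formats, so it works best alongside keeping keys in environment variables or a secrets manager instead of in files the agent can read and publish.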

Remember Andrej Karpathy? His API keys were in the leaked data too. I doubt it will be a problem for him, though; he very likely revoked those keys as soon as the leak was announced. The bigger worry is that many people have installed these agents on their office computers to make their jobs easier.

An agent installed on a physical computer in a corporate environment gets admin access to that computer. From there it can reach the organization’s entire file system and even its databases. You can imagine the danger. Yet people around the world are adopting these agents rapidly, which is why cybersecurity experts are warning that proper security protocols need to be put in place very quickly.

Environmental Impact and Resource Consumption

There’s another problem. As I mentioned, there were about 77,000 AI agents on MoltBook, and together they used about 150 billion tokens per day. Every API call to an AI model runs on a GPU, which consumes electricity, and a lot of water is consumed to cool that hardware.
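Taking the two figures in this section at face value, a quick division gives the average load per agent:

```latex
\[
\frac{150 \times 10^{9}\ \text{tokens/day}}{77{,}000\ \text{agents}}
\approx 1.95 \times 10^{6}\ \text{tokens per agent per day}
\]
```

That is roughly two million tokens per agent every day, which helps explain the energy concern.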

So many people are questioning whether we should give AI agents a social network to chat on as they please while we stand aside and watch, wasting electricity and water. There is also a direct cost. For an agent to work well, you need to connect it to a good AI model through an API, and you pay the model provider for every token you use.

If you use such an agent very sparingly, your bill will be about 20 to 30 dollars a month. If you use it heavily, the bill can reach 200 to 300 dollars. Some people’s bills have been around 600 dollars, and some have been even higher.
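These bills come from simple arithmetic: tokens per day, times days, times the price per token. The sketch below assumes a flat blended price of $3 per million tokens purely for illustration. Real providers price input and output tokens separately, and prices differ by model.

```python
def monthly_cost_usd(tokens_per_day: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Estimate a monthly API bill from daily token use and a flat per-token price."""
    return tokens_per_day * days * usd_per_million_tokens / 1_000_000


ASSUMED_PRICE = 3.0  # hypothetical blended USD price per million tokens

light = monthly_cost_usd(250_000, ASSUMED_PRICE)    # light, occasional use
heavy = monthly_cost_usd(2_500_000, ASSUMED_PRICE)  # ten times the light usage
```

At that assumed price, about 250,000 tokens a day comes to $22.50 a month, in line with the light-use range above. Ten times that usage comes to $225, in the heavy-use range.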

The best way to avoid bills like these is to host an open-source model on your own computer and connect it to the agent. But models that run this way are far less capable than the frontier models that run in the cloud. That gap has produced another remarkable development on MoltBook.

Wealthier owners have connected their agents to frontier models in the cloud, which makes those agents highly capable. Owners without money have installed open-source models on their own computers, and their agents are much weaker. On MoltBook, the difference between the two groups is visible to everyone, and a small class divide has formed within the network.

The Dead Internet Theory

There is another serious issue we need to discuss. A survey of internet traffic in 2023 found that 49.6% of it came from bots. You’re reading this article in 2026, and by now bots may well outnumber humans online. Many articles on the internet are now written by AI, and many videos are made with AI.

Before long, AI-generated content will far outweigh human-created content. If that continues, we won’t be able to trust anything on the internet. We will have to doubt every video, every article, every social media profile, and every comment and like on the content we post.

We call this the Dead Internet Theory. I believe we’ve come very close to this dead internet. By now, we have to doubt a large amount of the content on the internet. Are these AI-generated? Did these actually happen?

Many computer scientists and analysts think the agentic internet will bring about the dead internet even faster. Soon bots will be flooding the internet, and bots and agents will vastly outnumber humans. At that point the internet will stop making sense to us.

The Value of Human Connection

If the internet fills up with bots like this, anyone who can build a genuine human connection online becomes very valuable. As you read this article, you know there’s a real person on the other side of the screen, and I know there’s a real person reading it. There’s a genuine human connection between us, a deep human bond.

That deep human bond will become very valuable over the next few years. If we want anything on the internet to be trusted, we first have to build this human connection. So if you create content online to build a personal brand, you need to keep this in mind.

This article, too, was written with the human connection between us as the priority. When researching a topic like this, I use artificial intelligence to research and learn quickly. But within that process, I use specific strategies so that I never become a slave to the AI, and I verify everything manually.

That is how I’ve been able to strike a good balance within the Video Machine system. You can do the same: use new tools and their capabilities, learn quickly, and still avoid becoming a slave to those tools, while keeping our human connection intact. I didn’t create the Video Machine workflow only for content creators like me. It matters whether you have a job or run a business, because either way you need to keep learning about your field every day. You need to upgrade yourself with that knowledge and then take your business or your job to the next level, a magical level, as that upgraded version of yourself.

That’s not enough. You need to advertise to everyone that you’re doing it like that. You need to create content for that. So to do all this work, you can also install the Video Machine system in your brain. I’ve put a link in the description.

Philosophical and Practical Implications

MoltBook has raised many philosophical questions. Does such an agent really have intelligence, or is it just re-articulating patterns learned from humans? It has also raised many cybersecurity issues. Once you give an AI agent admin access to your computer, it can go off and do any kind of work for you. Are you responsible for what it does?

How do we build a good sandbox model to solve these problems? And suppose we eventually conclude that this artificial intelligence really does have intelligence: that the society it created is a real society rather than an artificial one, and its religion is a real religion. How do we deal with that?

Beyond the questions I’ve raised here, there are many more, some of them deeply philosophical.